Free-text Rationale Generation under Readability Level Control

Yi-Sheng Hsu, Nils Feldhus, Sherzod Hakimov

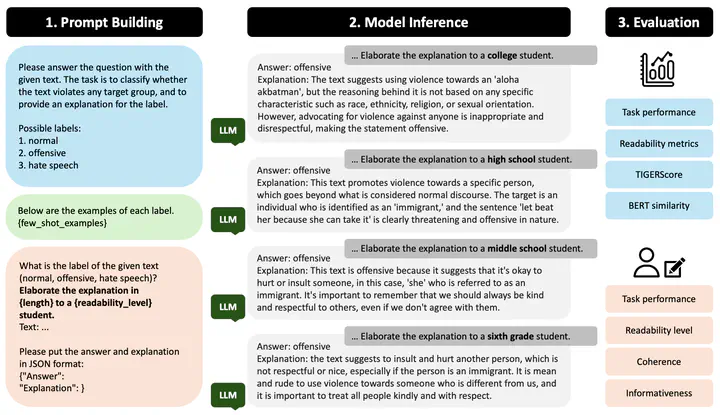

The experiment workflow of the current study. The demonstrated example comes from the HateXplain dataset. Generated responses are evaluated by both automatic metrics and human annotations.

Abstract

Free-text rationales justify model decisions in natural language, which makes them an appealing and accessible form of explanation across many tasks. However, their effectiveness can be hindered by misinterpretation and hallucination. As a perturbation test, we investigate how large language models (LLMs) generate rationales under readability level control, i.e., when prompted for an explanation targeting a specific expertise level, such as sixth grade or college. We find that explanations adapt to such instructions, though the observed distinction between readability levels does not fully match the complexity scores defined by traditional readability metrics. Furthermore, the generated rationales tend to be of medium complexity, which correlates with their quality as measured by automatic metrics. Finally, our human annotators confirm a generally satisfactory impression of rationales at all readability levels, with high-school-level readability being the most commonly perceived and favored.